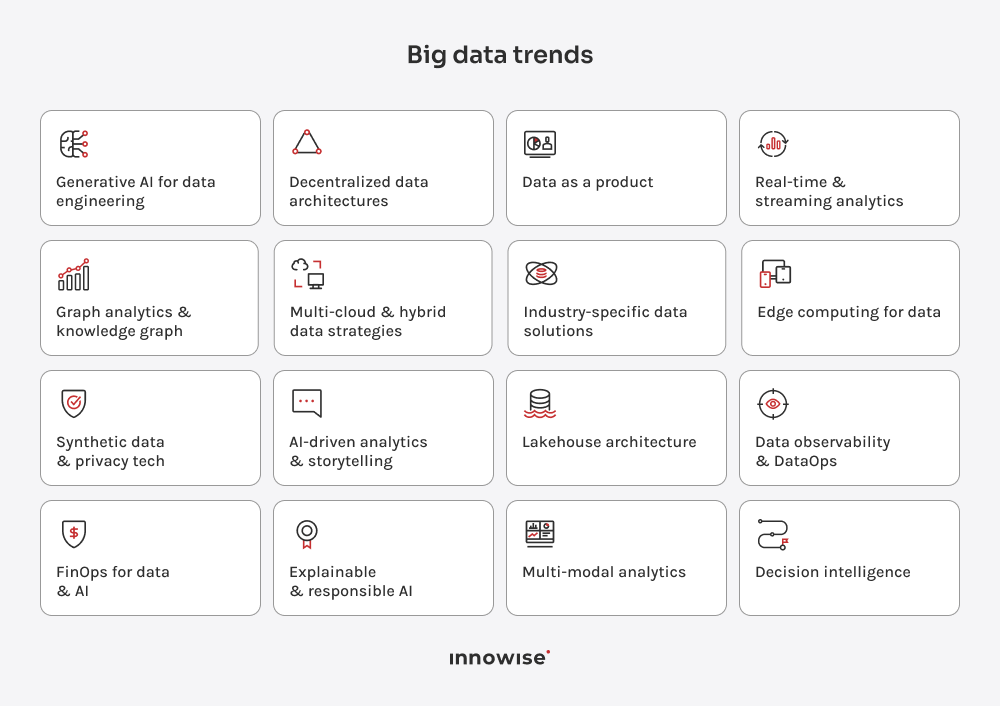

Let me kick things off with a bold statement: 2026 is the year of reckoning for the big data industry. We’ve spent the past decade experimenting with every shiny new tech under the sun: AI, IoT, cloud platforms, and all those buzzwords. But guess what? It’s time to deliver or miss the boat. If your company isn’t already figuring out how to turn that massive flood of data into something usable, you’re going to get left in the dust.

2026 is the time to make these tools work for you and stay ahead of the curve. Curious about which trends to watch? Let’s dive in.

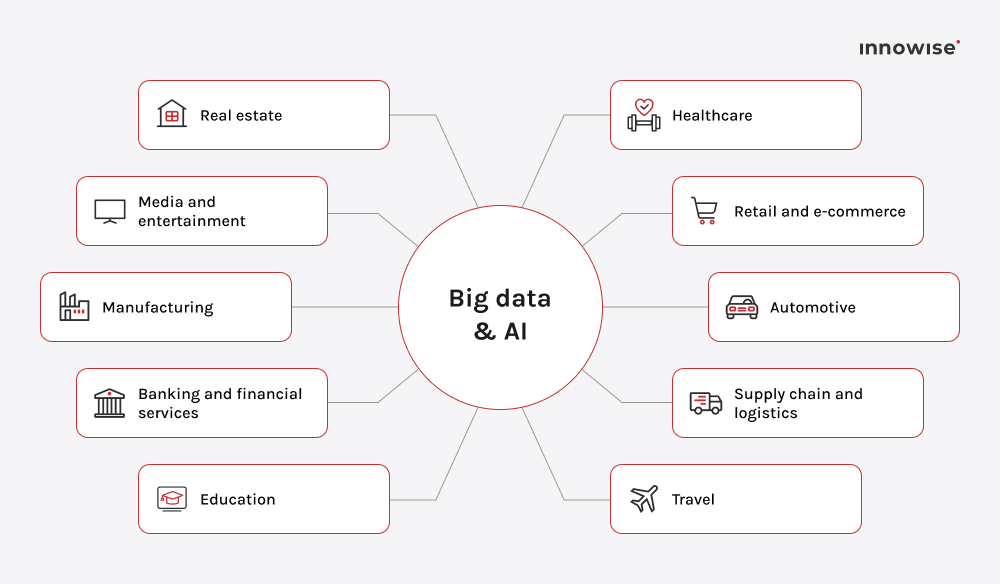

In 2026, big data becomes a key driver of business value, and you’ll find it impacting every industry out there. From AI-driven analytics copilots to real-time edge processing, these trends define the big data future that’s already unfolding. They will shape your business success, so read this piece till the end.

One of the most impactful future trends in big data analytics is the rise of Generative AI. While it’s not perfect yet, GenAI is already tackling the most time-consuming and tedious parts of data engineering. AI won’t eliminate data quality challenges entirely, but it can significantly reduce the hours your team spends on data preparation.

AI is now being embedded into data pipelines, where it automates tasks like data cleaning, filling in missing values (imputation), and transforming data. This means you’ll have clean, ready-to-use data in a fraction of the time. For instance, platforms like Databricks and Snowflake already include built-in functionality for generative-AI-enabled pipelines. These features help organisations automate data transformation, gap-filling, and delivery of AI-ready datasets.
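To make the cleaning and gap-filling steps concrete, here is a minimal sketch in plain Python. It uses simple median imputation as a stand-in for the model-driven imputation described above, and it is not the API of Databricks, Snowflake, or any other platform. The function name, the `orders` records, and the field names are all hypothetical.

```python
from statistics import median

def clean_and_impute(rows, field):
    """Trim whitespace from string values and fill missing `field` values with the median."""
    # Median of the values that are present (assumes at least one is present).
    known = [r[field] for r in rows if r.get(field) is not None]
    fill = median(known)
    cleaned = []
    for r in rows:
        # Build a new dict so the input rows are left untouched.
        row = {k: v.strip() if isinstance(v, str) else v for k, v in r.items()}
        if row.get(field) is None:
            row[field] = fill
        cleaned.append(row)
    return cleaned

# Hypothetical input with messy strings and one missing amount.
orders = [
    {"customer": " acme ", "amount": 120.0},
    {"customer": "globex", "amount": None},
    {"customer": "initech ", "amount": 80.0},
]
cleaned = clean_and_impute(orders, "amount")
```

An AI-assisted pipeline would typically replace the median with a learned estimate, but the shape of the step (detect the gap, fill it, hand on a clean record) stays the same.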

Pro tip:

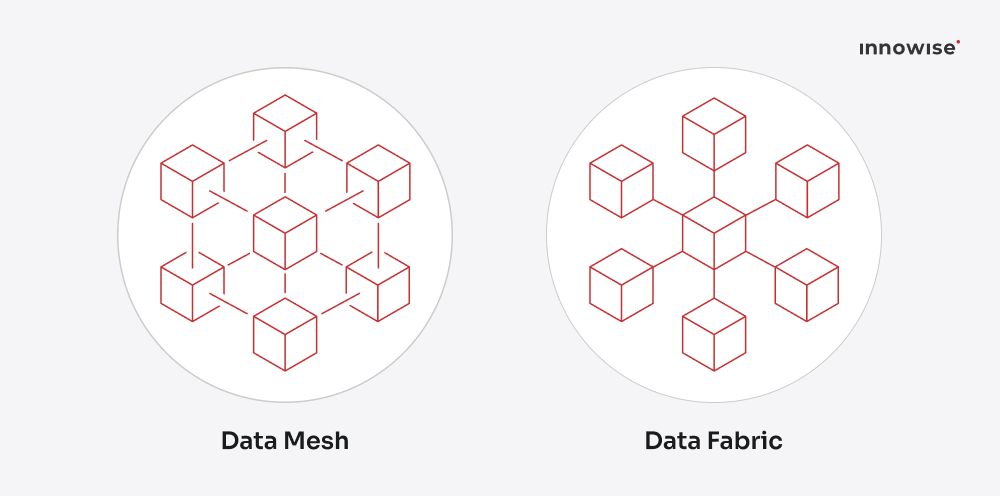

Relying on outdated data architectures will hold you back. The key to staying competitive is adopting Data Mesh and Data Fabric.

Data Mesh decentralizes data ownership, letting domain teams manage and serve their own data, cutting out the bottleneck of central IT. Data Fabric connects all data sources (cloud, on-prem, edge) into a cohesive system with automated metadata, lineage, and integration. Together, they create a scalable, flexible architecture that enables agility without sacrificing control.

The growth of the Data Mesh market (expected to reach $5.09 billion by 2032) shows how quickly businesses are shifting toward decentralized data models.

Pro tip:

While Data Mesh defines the architecture for decentralization, it works best when combined with a “data-as-a-product” mindset, where each dataset is owned, documented, and managed like a real product.

Data Mesh gives you the structure. Data as a Product gives you discipline. In 2026, smart companies don’t just decentralize data, they manage it like a product, with clear ownership, documentation, and measurable value. While many companies are still working to centralize their data, the trend is rapidly moving away from data buried in random spreadsheets or isolated databases. In a data-as-a-product mindset, every dataset has documentation, role assignment, service-level agreements, and a feedback loop for improvement.
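One way to picture "every dataset has documentation, role assignment, service-level agreements, and a feedback loop" is as a small descriptor that travels with the dataset. The sketch below is only an illustration: the `DataProduct` class, its fields, and the example values are hypothetical and do not correspond to any specific catalog or platform.

```python
from dataclasses import dataclass, field

@dataclass
class DataProduct:
    name: str
    owner: str                 # role assignment: the accountable team
    description: str           # documentation for consumers
    freshness_sla_hours: int   # service-level agreement: maximum data age
    feedback: list = field(default_factory=list)  # feedback loop

    def missing_fields(self):
        """Return the names of required metadata fields that are still empty."""
        required = {"owner": self.owner, "description": self.description}
        return [name for name, value in required.items() if not value.strip()]

# A documented, owned product versus an unfinished draft.
campaigns = DataProduct(
    "campaign_results", "marketing-analytics",
    "Daily campaign spend and conversions.", 24,
)
campaigns.feedback.append("Add channel-level breakdown")
draft = DataProduct("revenue_raw", "", "", 48)
```

A check like `missing_fields()` could run before a dataset is published, so that nothing reaches consumers without an owner and a description.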

This way, marketing knows where their campaign data lives. Finance trusts the revenue numbers without needing a “data reconciliation day.” And engineering finally stops acting as the bottleneck between everyone else’s dashboards.

Platforms like Snowflake Data Cloud and Databricks Marketplace already help teams publish, share, and even monetize data products internally or with partners. That opens new doors for collaboration and new revenue streams, especially when your “data product” becomes something others want to buy or build on.

Pro tip:

"At Innowise, we always make sure data works for you in a way that’s practical and efficient. Our approach integrates AI, automates data workflows, and enables real-time insights, so your team isn’t bogged down by complexity. You get clean, actionable data when you need it, so you can make decisions based on facts."

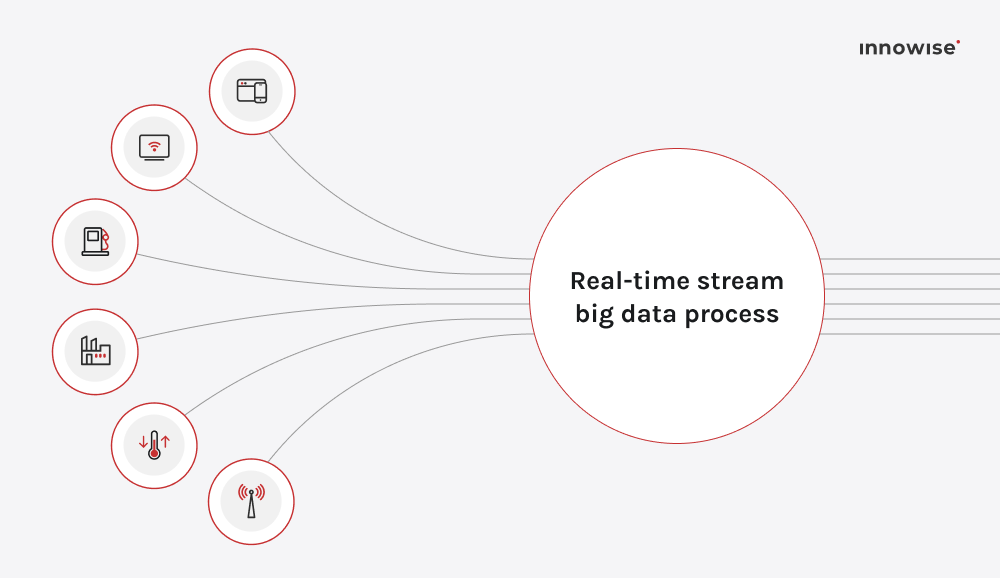

Next on the list of future trends in big data is real-time analytics. While the concept has been under development for years, in 2026 it is rapidly evolving from a competitive advantage into a core necessity for organisations that demand instantaneous insights. When you process data the moment it arrives, instead of waiting for batches, you unlock the ability to act on events, signals, and patterns as they happen. For the big-data arena, this means streaming high-volume data sources (IoT sensors, user interactions, logs) through pipelines that analyse and respond within seconds or milliseconds.
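The difference between batch and streaming is easiest to see in code: a streaming component makes a decision for each event as it arrives, using only a small rolling window of recent history. The following is a minimal sketch in plain Python, not a real streaming framework. The class name, window size, and threshold factor are illustrative assumptions.

```python
from collections import deque

class SlidingWindowAlert:
    """Flag a reading that exceeds `factor` times the mean of the recent window."""

    def __init__(self, size=5, factor=2.0):
        self.window = deque(maxlen=size)  # keeps only the last `size` readings
        self.factor = factor

    def process(self, value):
        # Decide immediately, per event, before adding the value to the window.
        alert = bool(self.window) and value > self.factor * (
            sum(self.window) / len(self.window)
        )
        self.window.append(value)
        return alert

detector = SlidingWindowAlert(size=3, factor=2.0)
readings = [10, 11, 9, 30, 10]  # hypothetical sensor values
alerts = [detector.process(v) for v in readings]
# alerts == [False, False, False, True, False]
```

In a production pipeline the loop over `readings` would be replaced by a consumer reading from a message stream, but the per-event decision logic would look much the same.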

The market backs this shift. The global streaming analytics sector was valued at $23.4 billion in 2023 and is projected to grow to about $128.4 billion by 2030, at a CAGR of roughly 28.3% between 2024 and 2030. Industries like finance, telecoms, manufacturing, and retail are already using stream-based models for fraud detection, dynamic pricing, predictive maintenance, and customer experience optimization.

Pro tip:

If your data strategy still treats real‑time as an “extra” and focuses heavily on batch first, 2026 will highlight the gap, believe me.

Graph analytics steps into the spotlight in 2026, not as a new technology, but because its adoption is being rapidly accelerated by AI integration. Instead of treating data solely as rows and columns, organisations use graphs to understand how entities connect: customers, products, sensor nodes, fraud rings, you name it. Knowledge graphs and graph databases make this possible: they map complex relationships and expose insights traditional methods struggle to reveal. For example, a recent graph database report by Verified Market Reports explains that graph databases are now critical for real-time processing, semantic relationships, and AI-driven anomaly detection.

For business leaders, the key benefit is this: you uncover why things happen, not just that they happen. In fraud detection, you spot the network of actors; in recommendation, you map hidden affinities; in IoT, you trace chains of failure. That power brings deeper insight, faster detection, and more strategic action.
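As a small illustration of "spotting the network of actors", the sketch below links accounts to the devices and cards they share and then finds connected groups. This is a plain-Python toy using a depth-first search, not a graph database query. The account, device, and card identifiers and the three-account threshold are hypothetical.

```python
from collections import defaultdict

def connected_groups(edges):
    """Return the connected components of an undirected graph as sorted lists."""
    graph = defaultdict(set)
    for a, b in edges:
        graph[a].add(b)
        graph[b].add(a)
    seen, groups = set(), []
    for start in sorted(graph):
        if start in seen:
            continue
        stack, group = [start], []
        seen.add(start)
        while stack:  # iterative depth-first search
            node = stack.pop()
            group.append(node)
            for neighbor in graph[node]:
                if neighbor not in seen:
                    seen.add(neighbor)
                    stack.append(neighbor)
        groups.append(sorted(group))
    return groups

# Hypothetical links: which account used which device or card.
shared = [
    ("acct_1", "device_A"), ("acct_2", "device_A"),
    ("acct_2", "card_X"), ("acct_3", "card_X"),
    ("acct_4", "device_B"),
]
groups = connected_groups(shared)
# Treat any group tying together three or more accounts as a candidate ring.
rings = [g for g in groups if sum(n.startswith("acct_") for n in g) >= 3]
```

Looking at rows one at a time, none of these accounts stands out. Only the connections reveal that three of them are linked through a shared device and card.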

Pro tip:

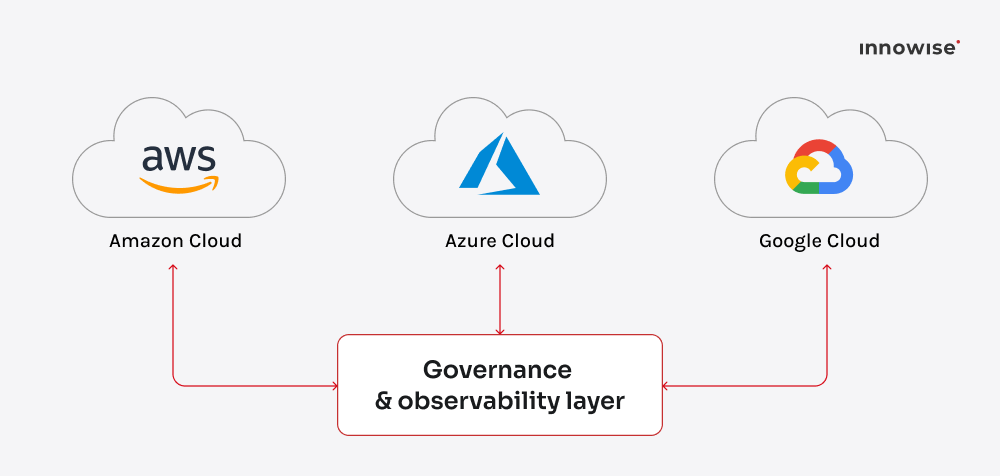

In 2026, relying solely on a single cloud provider is increasingly seen as a risk, much like putting all your investment into a single stock. While many organizations still primarily use one platform, the most strategically advanced companies now play the multi-cloud game. They balance services from AWS, Azure, and Google Cloud to avoid lock-in and squeeze the best performance-to-cost ratio out of each.

Hybrid setups are rising, too. This is where organizations combine cloud services with their existing on-premises data centers. The reasons for this hybrid approach go deeper than just keeping sensitive data on-prem and include:

The catch? Complexity. Spreading workloads across clouds introduces more moving parts: different APIs, billing systems, and governance rules. The winners are those who automate the orchestration and monitoring layer. Think cross-cloud query engines, unified identity management, and observability tools that track latency and cost in real time.

Pro tip:

Generic data platforms are great. Until they start solving nothing in particular. This is why, in 2026, companies in super-competitive, high-risk, or regulated fields are done with generic tools. They want industry-tuned solutions that speak their language, handle their regulations, and deliver results instead of dashboards that look impressive and mean little.

So, why are companies suddenly demanding these specialized solutions in 2026? It really boils down to three big things:

Healthcare teams want predictive analytics that help doctors spot patient risks before they escalate. Banks care about fraud detection, risk scoring, and hyper-personalized offers. Manufacturers track equipment health and supply chain visibility down to the minute. And retailers are blending transaction data with in-store sensors and social trends to forecast demand without staring into spreadsheets.

That’s why the market is shifting toward domain-specific data products: pre-built models, connectors, and compliance frameworks that fit straight into real workflows. This specialization is already demonstrating massive growth in vertical markets. For instance, according to Visiongain, the healthcare analytics market alone will reach $101 billion by 2031, driven by this kind of specialization.

Pro tip:

In 2026, the demand for immediate, automated action is paramount. Though the cloud is still vital, companies are realizing it costs too much time and money to send every single byte of data to a distant server for processing.

Edge computing is the fix. It brings data processing closer to where it’s generated: sensors, machines, devices, even cars. Instead of pushing terabytes across the network, you process the important stuff locally and act instantly.
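Here is a minimal sketch of "process the important stuff locally": an edge device reduces a batch of raw readings to a compact summary, acts on anomalies immediately, and would forward only the summary upstream. The function name, the temperature readings, and the threshold are assumptions made for illustration.

```python
def summarize_at_edge(readings, threshold):
    """Reduce raw sensor readings to a small summary plus any out-of-range values."""
    anomalies = [r for r in readings if r > threshold]
    return {
        "count": len(readings),
        "mean": sum(readings) / len(readings),
        "max": max(readings),
        "anomalies": anomalies,
    }

# Hypothetical temperature readings collected on the device.
raw = [70.1, 70.4, 69.9, 95.2, 70.0]
payload = summarize_at_edge(raw, threshold=90.0)

# The device can react right away, without a round trip to the cloud.
act_locally = bool(payload["anomalies"])
```

Only `payload` would need to cross the network, which is where the bandwidth and latency savings come from.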

Why is this trend exploding now?

This matters most for industries where speed is life: smart factories that adjust production lines on the fly, hospitals that monitor patients in real time, or retail chains that manage pricing dynamically based on local demand. And the money backs it up: IDC forecasts global spending on edge computing solutions to grow at ~13.8% CAGR and reach nearly $380 billion by 2028.

The smartest organizations aren’t replacing the cloud; they’re completing it. They use a hybrid setup: local processing for speed, cloud storage for scale. The result is beautiful: lower latency, reduced bandwidth costs, and faster decisions that actually move the needle.

Pro tip:

In 2026, access to real-world data is trickier than ever: privacy laws are stricter, regulators are watchful, and users are far less forgiving. That’s why we need synthetic data.

The trend is exploding now because the GenAI boom has finally made synthetic data high-quality enough to reliably mimic complex, real-world information. Companies are increasingly relying on this artificial, statistically representative data to train massive AI models faster and cheaper than traditional methods, while making it easier to meet tough compliance requirements like GDPR and the EU’s AI Act.
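The core idea can be shown with a deliberately simple stand-in: fit a distribution to real values, then sample new values from it instead of sharing the originals. The sketch below uses a single normal distribution in place of the generative models described above. The function name and the sample amounts are hypothetical, and a fit like this does not by itself guarantee privacy or compliance.

```python
import random
from statistics import mean, stdev

def synthesize(real_values, n, seed=0):
    """Draw n synthetic values from a normal distribution fitted to real_values."""
    rng = random.Random(seed)  # seeded so the output is reproducible
    mu, sigma = mean(real_values), stdev(real_values)
    return [rng.gauss(mu, sigma) for _ in range(n)]

# Hypothetical real transaction amounts that should not leave the company.
real_amounts = [42.0, 55.5, 38.2, 61.0, 47.3, 52.8]
synthetic = synthesize(real_amounts, n=5000)
```

The synthetic sample follows the overall shape of the real data (similar mean and spread) without containing any of the original records, which is the property that makes it useful for training and testing.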

Synthetic data tools are everywhere: from finance firms training fraud detection models to healthcare companies running AI diagnostics without exposing patient data. Gartner expects that by 2030, synthetic data will surpass real data in AI training, because it’s safer, faster, and easier to scale.

Pro tip:

In 2026, analytics finally feels human. AI copilots and narrative visualization tools now turn data into clear stories instead of endless charts. Tools like Power BI Copilot, Tableau GPT, camelAI, and Looker’s GenAI layer can query, summarize, and explain insights in plain language.

Think of them as your on-demand data analyst. You can ask, “How did revenue trend this quarter?” or “Which campaign brought the highest ROI?” and get instant answers in plain language, because these tools connect large language models directly to your company’s data.

Pro tip:

In 2026, the line between data lakes and warehouses has blurred. The new standard is lakehouse architecture, which is a hybrid model that combines the scalability of data lakes with the structure and performance of warehouses. You can store unstructured data, query it with SQL, and run machine learning workloads. All in one place. Without juggling ten different platforms.

Vendors like Databricks, Snowflake, and Google BigQuery are leading the charge here.

Pro tip:

In 2026, managing data pipelines without observability is like flying a plane with the dashboard turned off. You might move fast, but you have no idea what’s breaking. Data observability is how teams get visibility into the health, freshness, and reliability of their data. It tells you when something’s wrong, why it happened, and how to fix it before dashboards start showing nonsense.
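Two of the signals mentioned above, freshness and reliability, can be checked with very little code. The sketch below is a minimal illustration rather than the interface of any observability product. The `check_health` function, the `loaded_at` and `revenue` fields, and the thresholds are assumptions.

```python
from datetime import datetime, timedelta, timezone

def check_health(rows, now, max_age, max_null_rate, field):
    """Return a list of human-readable issues found in a batch of rows."""
    issues = []
    # Freshness: how old is the most recently loaded row?
    newest = max(r["loaded_at"] for r in rows)
    if now - newest > max_age:
        issues.append(f"stale: newest row is {now - newest} old")
    # Reliability: what share of the field is missing?
    null_rate = sum(r[field] is None for r in rows) / len(rows)
    if null_rate > max_null_rate:
        issues.append(f"nulls: {null_rate:.0%} of '{field}' is missing")
    return issues

now = datetime(2026, 1, 15, 12, 0, tzinfo=timezone.utc)
batch = [
    {"loaded_at": now - timedelta(hours=30), "revenue": 100.0},
    {"loaded_at": now - timedelta(hours=26), "revenue": None},
]
issues = check_health(batch, now, timedelta(hours=24), 0.1, "revenue")
```

Running a check like this on every load, and alerting when `issues` is non-empty, is what catches a broken pipeline before the dashboard does.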

So, why is this essential now? Because you can’t have governance or compliance without it.

This goes hand-in-hand with DataOps, which automates things like testing and deployment. Together, observability and DataOps give you a reliable, compliant, and rock-solid data backbone with fewer surprises and faster recovery times.

Pro tip:

Have cloud bills ever kept you awake at night? As data volumes explode and AI workloads multiply, FinOps (financial operations for cloud and data) becomes essential. The goal is simple: understand where every dollar in your data ecosystem goes and make sure it’s actually buying business value, not just bigger servers.

Training large models, storing petabytes of data, and running endless queries can drain budgets fast. FinOps teams now use analytics and automation to track costs in real time, spot inefficiencies, and forecast usage across departments. Cloud providers even offer native tools for this (AWS Cost Explorer, Google Cloud Billing, Azure Cost Management), but the real gains come from integrating financial metrics directly into your data workflows.
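At its simplest, tracking costs and spotting inefficiencies means rolling up billing line items by owner and comparing them with a budget. The sketch below assumes billing data has already been exported into plain records. It does not call AWS Cost Explorer, Google Cloud Billing, or Azure Cost Management, and the team names, services, and amounts are hypothetical.

```python
from collections import defaultdict

def cost_by_team(line_items):
    """Sum cost per team from a list of billing line items."""
    totals = defaultdict(float)
    for item in line_items:
        totals[item["team"]] += item["cost_usd"]
    return dict(totals)

def over_budget(totals, budgets):
    """Return the teams whose spend exceeds their budget, sorted by name."""
    return sorted(
        team for team, cost in totals.items() if cost > budgets.get(team, 0.0)
    )

# Hypothetical exported billing records and monthly budgets.
line_items = [
    {"team": "ml", "service": "gpu-training", "cost_usd": 4200.0},
    {"team": "ml", "service": "storage", "cost_usd": 900.0},
    {"team": "bi", "service": "warehouse-queries", "cost_usd": 1500.0},
]
budgets = {"ml": 5000.0, "bi": 2000.0}

totals = cost_by_team(line_items)
flagged = over_budget(totals, budgets)
```

Wiring a roll-up like this into the same workflow that schedules training jobs and queries is one way to put financial metrics next to the workloads that generate them.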

Pro tip:

In 2026, AI runs so much of business that “just trust the model” doesn’t fly anymore. Boards, regulators, and customers all expect transparency. They want to know why an algorithm made a decision, not just the result. That’s why Explainable AI (XAI) and Responsible AI are gaining traction. Together, they make machine learning less of a black box and more of a system you can govern.

Banks already use explainable models to justify credit decisions to auditors. Healthcare providers rely on them to show how diagnostic algorithms reach conclusions. Even HR systems are under scrutiny to prove fairness in hiring recommendations. When decisions affect people or profits, blind faith in AI isn’t strategy; it’s a risk.
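For the simplest class of models, an explanation is just a breakdown of the score into per-feature contributions. The sketch below uses a toy linear credit score to show the idea. The weights, feature names, and applicant values are invented for illustration, and real explainability tooling for complex models works differently under the hood.

```python
def explain_score(weights, bias, applicant):
    """Return a linear model's score and each feature's contribution to it."""
    contributions = {name: weights[name] * applicant[name] for name in weights}
    score = bias + sum(contributions.values())
    # Rank features by how strongly they moved the score, in either direction.
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return score, ranked

# Hypothetical model weights and one applicant.
weights = {"income_k": 2, "late_payments": -15, "years_employed": 3}
applicant = {"income_k": 40, "late_payments": 3, "years_employed": 5}

score, ranked = explain_score(weights, bias=10, applicant=applicant)
# score == 60
# ranked == [("income_k", 80), ("late_payments", -45), ("years_employed", 15)]
```

An auditor reading `ranked` can see that income raised the score most and late payments pulled it down, which is the kind of answer that "just trust the model" cannot give.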

Pro tip:

By 2026, big data development will move beyond tables and dashboards into a new era of multi-modal analytics. Here, text, images, video, and sensor data combine to create a complete, context-rich picture. Instead of analyzing customer feedback and sales numbers separately, teams can now correlate call transcripts, product photos, and user behavior in a single workspace.

Sounds like sci-fi, right? But platforms like Databricks MosaicML, Anthropic’s Claude for data, and OpenAI’s GPT-4 Turbo with vision already handle multi-format data inputs. The result: context-rich insights that feel almost intuitive. Imagine predicting equipment failures by cross-analyzing vibration logs, thermal images, and maintenance notes. That’s what multi-modal analysis enables.

Pro tip:

Last on the list of key trends in big data is decision intelligence (DI). It blends data science, psychology, and business logic to help organizations make smarter calls faster. Instead of throwing a hundred metrics at you, DI systems model how choices lead to outcomes, then simulate scenarios before you commit.

Think of it as analytics that answers “what happens if we actually do this?”, not just “what happened last quarter?” Retailers use it to test pricing strategies before rollout. Banks use it to simulate risk exposure across portfolios. Even HR teams use DI to predict hiring and retention impact before policies go live.
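To show what "simulate scenarios before you commit" can look like, the sketch below tests a few candidate prices against a simple demand model and picks the most profitable one. It assumes constant price elasticity, which is a textbook simplification rather than how any particular DI product models demand. All numbers are hypothetical.

```python
def simulate_price(price, base_price, base_demand, elasticity, unit_cost):
    """Estimate demand and profit at `price` under a constant-elasticity assumption."""
    demand = base_demand * (price / base_price) ** elasticity
    return {
        "price": price,
        "demand": demand,
        "profit": (price - unit_cost) * demand,
    }

# Hypothetical product: sells 1000 units at 10.0, costs 6.0 per unit to make.
scenarios = [
    simulate_price(p, base_price=10.0, base_demand=1000.0,
                   elasticity=-2.0, unit_cost=6.0)
    for p in (9.0, 10.0, 12.0, 14.0)
]
best = max(scenarios, key=lambda s: s["profit"])
# best["price"] == 12.0
```

The value is in comparing outcomes side by side: under these assumptions, raising the price to 12.0 loses volume but still earns more than the current price, while 14.0 overshoots.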

The market bears this out: the global decision intelligence market was estimated at $15.22 billion in 2024 and is projected to reach $36.34 billion by 2030, growing at about a 15.4% CAGR.
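For readers who want to sanity-check growth figures like these, the compound annual growth rate is easy to compute from the two endpoints. The sketch below uses the standard CAGR formula with the values quoted above; the six-year span (2024 to 2030) is an assumption about how the period is counted.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate between two values `years` apart."""
    return (end_value / start_value) ** (1 / years) - 1

# Endpoints quoted in the text, in billions of dollars.
implied = cagr(15.22, 36.34, years=2030 - 2024)
# implied is about 0.156, i.e. roughly 15.6% per year
```

The result lands close to the reported figure of about 15.4%. A small gap like this usually comes down to rounding or a different base year in the underlying report.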

Pro tip:

So, what is the future of big data? 2026 brings a new level of maturity to it. The focus now is on choosing the tools and methods that actually create impact. Businesses that connect technology with clear goals will see faster growth and stronger results.

Use AI where it saves time and improves accuracy. Build a data mesh that helps teams work together instead of in silos. Invest in real-time analytics that help you act at the right moment, not after the fact.

The leaders of this year understand one thing: value comes from applying data with purpose. Pick what fits your strategy, make it work across teams, and let data become the engine that drives every smart move you make.

Head of Big Data and AI

Philip brings sharp focus to everything related to data and AI. He is the one who asks the right questions early on, sets a strong technical vision, and makes sure we build not just smart systems but the right ones, for real business value.
