AI in 2026 feels less like “wow” and more like “okay, who owns this in production?” A year or two ago, people wanted a chatbot because everyone else had one. Now they want something that saves time, cuts mistakes, or helps staff stop answering the same question 200 times a day.

Here’s the blunt truth. AI keeps getting cheaper to try and more expensive to run well. Anyone can spin up a model and get a decent prototype. Then reality hits: bad data, weird edge cases, legal questions, security reviews, latency, and the awkward moment when the model confidently makes something up in front of a customer.

So what are the latest developments in artificial intelligence that actually matter for business? The ones that survive contact with the real world:

Scroll on to learn more!

If you’re planning something serious this year, start with a scoped AI consulting effort. It isn’t magic, but it’s cheaper than building the wrong thing and then pretending it was “a learning project”.

AI started as a simple question, “Can machines think?”, and then turned into a pile of math, data, GPUs, and deadlines. Alan Turing posed that question in his 1950 paper “Computing Machinery and Intelligence” and proposed the imitation game, which we now call the Turing test.

Not long after, the field got its name. The Dartmouth proposal (written in 1955 for a summer 1956 workshop) basically said: let’s treat “intelligence” like an engineering problem and see how far we get. Bold plan. It worked, just slower than the hype cycles wanted.

From there, AI kept bouncing between big promises and genuine progress. A few milestones explain why 2026 looks the way it does:

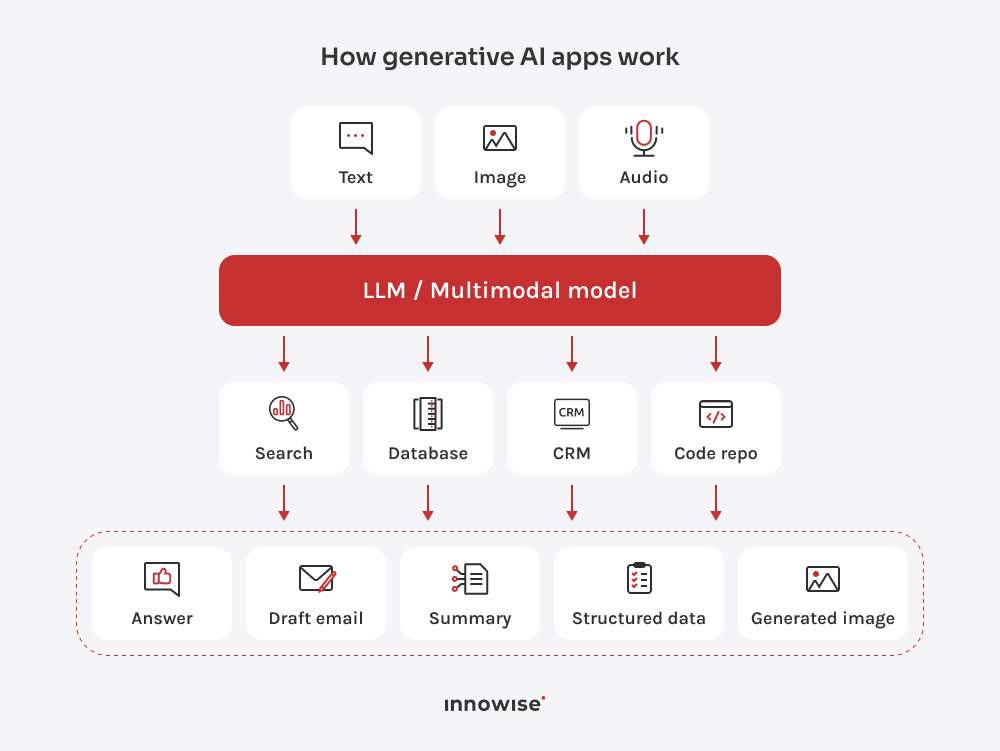

Now, the big buckets of AI you keep hearing about make more sense:

Agentic AI means you give a system a goal, and it handles the steps. Such software can plan, invoke tools, check results, and try again when something fails.
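To make that loop concrete, here is a minimal Python sketch of the plan, invoke, check, retry cycle. Everything in it is an assumption for illustration: `Step`, `AgentRun`, `run_agent`, and the `max_retries` parameter are hypothetical names, the plan is a fixed list rather than something a model produced, and the “check” is simply “the tool returned a non-empty string”. A real agent framework will look different.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Step:
    """One planned action: which tool to call and with what argument."""
    tool: str
    arg: str


@dataclass
class AgentRun:
    """What the run produced, plus a log of every attempt."""
    results: List[str] = field(default_factory=list)
    log: List[str] = field(default_factory=list)


def run_agent(
    plan: List[Step],
    tools: Dict[str, Callable[[str], str]],
    max_retries: int = 2,
) -> AgentRun:
    """Walk the plan step by step; retry a failing step, stop if it keeps failing."""
    run = AgentRun()
    for step in plan:
        # One initial attempt plus up to `max_retries` retries.
        for attempt in range(1, max_retries + 2):
            try:
                result = tools[step.tool](step.arg)
                # Hypothetical result check: an empty answer counts as a failure.
                if not result:
                    raise ValueError("empty result")
            except Exception as exc:
                run.log.append(f"{step.tool} attempt {attempt} failed: {exc}")
                continue
            run.log.append(f"{step.tool} attempt {attempt} ok")
            run.results.append(result)
            break
        else:
            # Every attempt failed: record it and abandon the rest of the plan.
            run.log.append(f"{step.tool} gave up after {max_retries + 1} attempts")
            break
    return run
```

Calling `run_agent([Step("lookup", "A-17")], {"lookup": some_function})` returns the results along with a log of every attempt, which is the part you will want when something goes wrong.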

Why it matters in 2026: Companies feel buried in workflows. Tickets bounce between teams. People copy-paste between apps. Someone always forgets a step. Agent-style systems attack that mess.

Here’s what I see working in real life (and what breaks if you don’t design it right):

But be warned: agentic systems can also become very confident chaos generators if you let them run loose. The fix is dull, but that’s good. Give the agent limited permissions, log everything, and force checkpoints. If it can spend money, change data, or contact customers, it needs a gate.
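Here is a rough Python sketch of what “limited permissions, log everything, force checkpoints” can look like. The action names, the `ALLOWED` and `NEEDS_APPROVAL` sets, and the `gate` function are all hypothetical, and the approval step is reduced to an `approved_by` argument. Treat it as one possible shape for a gate, not a recommended design.

```python
import logging
from dataclasses import dataclass
from typing import Optional

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("agent.gate")

# Hypothetical allowlist: anything not named here is denied outright.
ALLOWED = {"read_ticket", "draft_reply", "send_reply", "issue_refund"}
# Actions that spend money or contact customers wait for a human.
NEEDS_APPROVAL = {"send_reply", "issue_refund"}


@dataclass(frozen=True)
class Action:
    name: str
    payload: dict


def gate(action: Action, approved_by: Optional[str] = None) -> str:
    """Return 'run', 'hold', or 'deny' for a requested action, and log the decision."""
    if action.name not in ALLOWED:
        decision = "deny"
    elif action.name in NEEDS_APPROVAL and approved_by is None:
        decision = "hold"  # checkpoint: park it until someone signs off
    else:
        decision = "run"
    # Every decision is logged, including the ones that were allowed.
    log.info("action=%s decision=%s approved_by=%s", action.name, decision, approved_by)
    return decision
```

With this sketch, `gate(Action("read_ticket", {}))` returns `"run"`, a refund request without an approver returns `"hold"`, and an action that is not on the list returns `"deny"`. The point of keeping it this boring is that the rules fit on one screen and anyone on the team can audit them.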

If you want to build agents this way, that is what our AI agents development work covers: define the allowed actions, wire the agent to your tools, and set guardrails so it helps your team instead of creating a new class of incidents.

The unglamorous reality is that the biggest gains come from narrow, high-volume tasks: support replies, sales follow-ups, document drafting, internal Q&A, and “turn this mess of notes into something a human can read”. If you want this built into a product or an internal workflow, this sits right in our generative AI development and AI chatbot development work.

This one feels like paperwork because it is paperwork. But it’s also the reason AI projects survive security review, legal review, procurement, and the first upset customer.

What changes in 2026:

Governance feels annoying until the day it saves you. And that day always comes.

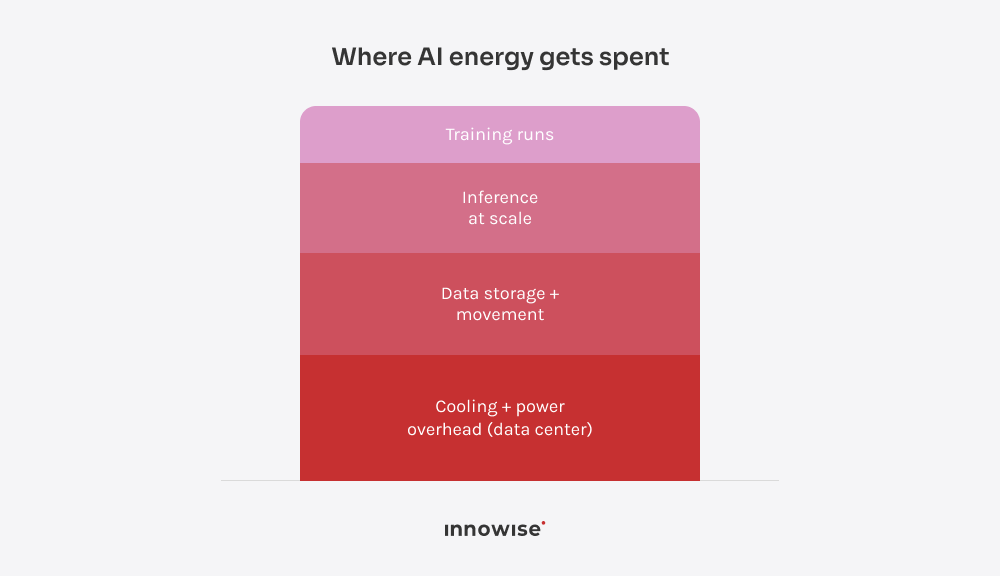

This trend exists because AI eats power, and power is not free. In some regions, it’s also a political headache now, not just a line item. The IEA has been pretty direct about AI driving electricity demand growth from data centers.

What this looks like in 2026:

This is one of the biggest AI industry trends for 2026: companies stop buying generic AI and start building narrow systems that live inside real workflows. Not a demo tab. Not a chatbot that answers and then shrugs. A tool that does part of the job.

Here’s what this looks like when it’s done right:

My honest take: the “best” use case is usually the one that happens a lot and hurts a little every time. If it happens twice a month, AI won’t save you. It’ll just become another thing to maintain.
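A quick back-of-the-envelope check makes the point. The formula and every number below are assumptions for illustration, not measurements: hours saved per month is runs times minutes saved per run, minus whatever it costs to keep the thing alive. `monthly_net_hours` is a hypothetical helper.

```python
def monthly_net_hours(
    runs_per_month: int,
    minutes_saved_per_run: float,
    upkeep_hours_per_month: float,
) -> float:
    """Hours saved per month after subtracting maintenance time."""
    saved_hours = runs_per_month * minutes_saved_per_run / 60
    return saved_hours - upkeep_hours_per_month


# A task that happens a lot and hurts a little (hypothetical numbers).
high_volume = monthly_net_hours(runs_per_month=6000, minutes_saved_per_run=2, upkeep_hours_per_month=10)

# A task that happens twice a month, even if each run is painful.
low_volume = monthly_net_hours(runs_per_month=2, minutes_saved_per_run=40, upkeep_hours_per_month=10)
```

Under these made-up numbers the high-volume task nets 190 hours a month, while the twice-a-month task comes out negative (roughly minus 8.7 hours) because upkeep outweighs the savings.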

If you want to turn these latest advancements in AI into a working feature inside your ERP/CRM/WMS/EHR stack, that’s where AI development pays off — because integration is the whole job, not the last step.

AI is now part of the security problem and part of the security stack. Attackers use it to scale scams. Defenders use it to spot weird behavior faster. And if you build AI apps, you also need to defend the model itself from people trying to mess with it. NIST has even published a full taxonomy on adversarial ML attacks and mitigations, which tells you this problem is no longer niche.

What this looks like in 2026:

My view: if your AI app can take actions, it’s a security system now. Treat it like one.

Most teams don’t want AI to replace staff. They want it to take the annoying parts of the job and leave the parts that need judgment. If you’ve ever watched a senior specialist spend 40 minutes reformatting someone else’s notes, you already know why this trend sticks.

Here’s where it actually helps:

My honest take: “human–AI collaboration” sounds like a poster on a wall. In practice, it’s two rules — let AI do the first pass, and don’t let it make final calls where mistakes hurt.

If you want a career-proof skill set in 2026, don’t aim to “learn AI”. Aim to build systems that use AI and don’t embarrass you in production.

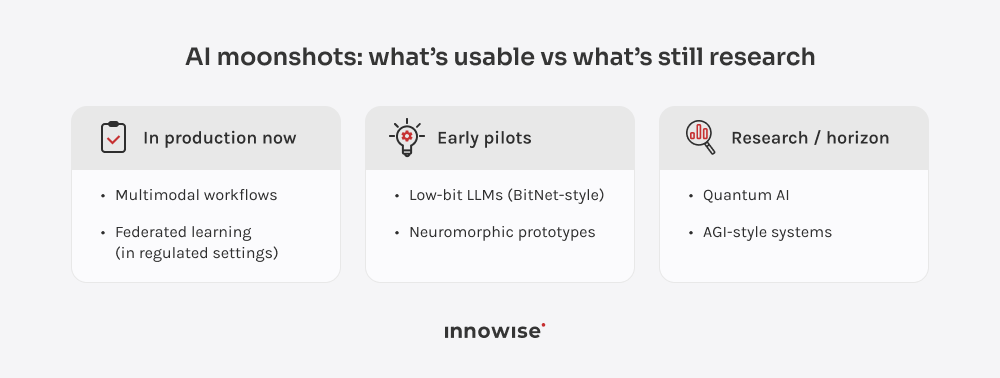

What I’d bet on:

One last thing: continuous learning isn’t optional here. Not because tech moves fast (it does), but because today’s latest AI technology becomes tomorrow’s baseline. The people who stay valuable are the ones who keep building, testing, and shipping (not the ones who collect course certificates like Pokémon).

You think the near future of AI is one big new model drop? Nope! It’s AI showing up everywhere, quietly, inside products and workflows.

Where this is heading (to my mind):

AI trends in 2026 point to one thing: AI is becoming a normal part of software and operations. The flashy phase is fading. The “ship it, run it, govern it” phase is here.

If you’re building with AI this year, the winners won’t be the teams that chase every new AI technology name. They’ll be the teams that pick a few high-volume problems, connect AI to real data and tools, and put guardrails around anything that can hurt customers or the business.

And yeah, you should keep learning. First of all, it’s trendy now. Second, recent advances in artificial intelligence keep turning yesterday’s advantage into today’s baseline.
